Topaz Labs trained the software with several million images containing noise, along with several million clean images. Depending on which ML model they used, DeNoise AI likely developed a pattern recognition model based on neural nets, or on probabilistic models such as Bayesian or Markov models. In the end, DeNoise is probably a sophisticated pattern recognizer that associates noisy inputs with the most “learned” clean output, then maps the color back in.

A lot of eye rolling comes when terms like Artificial Intelligence or Machine Learning are used. I’ve got somewhat of an ML background, and other than the use of “AI”, the product literature seems to describe a pretty straightforward but novel approach to ML. It at least passes the sniff test. To prevent abuse of these phrases, I recommend following Hannah Fry’s litmus test, from her book Hello World: Being Human in the Age of Algorithms:

The helpfulness of algorithms varies drastically. So how can you tell the bad from the good? There’s a quick trick I use to weed out suspicious examples. Whenever you see a story about an algorithm, replace buzzwords like “machine learning,” “artificial intelligence” and “neural network” with the word “magic.” Does everything still make grammatical sense? Is any of the meaning lost? If not, I’d be worried that something smells like bull.
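Fry’s substitution test is mechanical enough to try in a few lines of Python. This is only a rough sketch: the function name `magic_test` and the sample sentence are my own illustrations, not anything from the book or from Topaz Labs, and the buzzword list is just the three phrases named in the test.

```python
import re

# The three buzzwords named in the test; extend the tuple as needed.
BUZZWORDS = ("machine learning", "artificial intelligence", "neural network")

# One case-insensitive pattern that matches any of the buzzwords.
_PATTERN = re.compile("|".join(re.escape(w) for w in BUZZWORDS), re.IGNORECASE)


def magic_test(sentence: str) -> str:
    """Return the sentence with every buzzword swapped for the word 'magic'."""
    return _PATTERN.sub("magic", sentence)


# Hypothetical marketing sentence, made up for illustration.
claim = "DeNoise AI uses machine learning to identify noise."
print(magic_test(claim))
```

The code only does the swap. Deciding whether the rewritten sentence has lost any meaning is still a judgment call for the reader.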

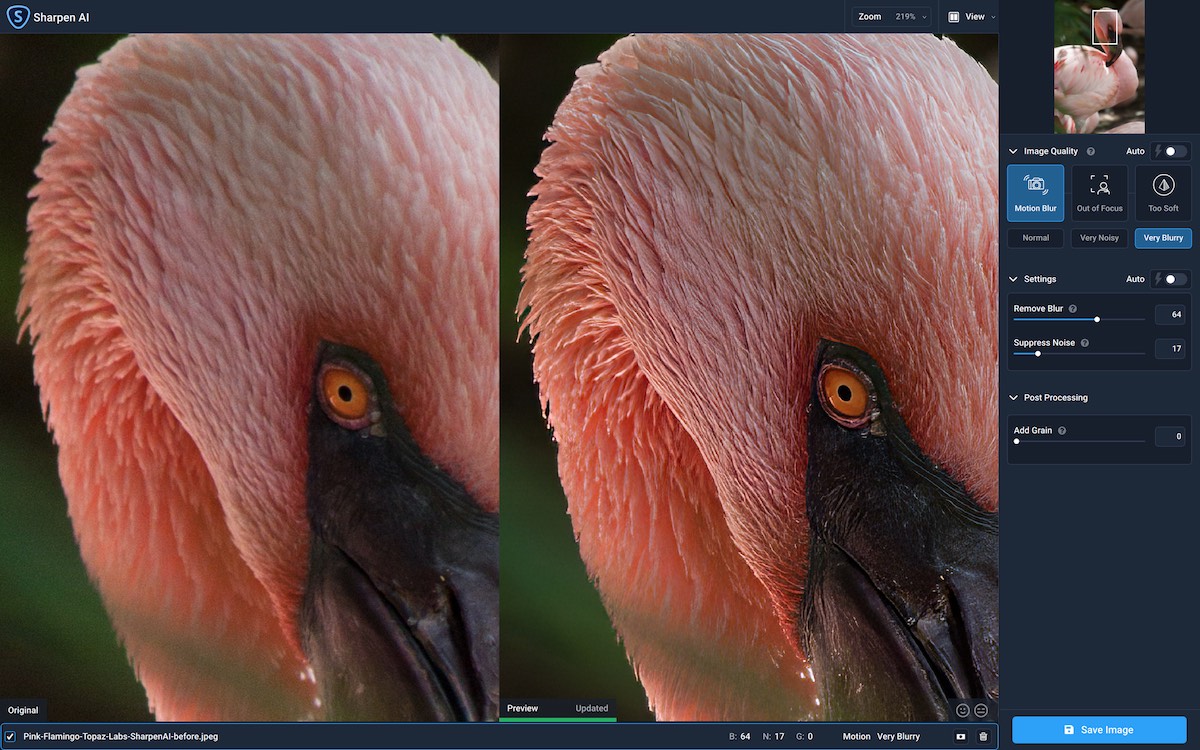

Topaz Labs DeNoise AI review by Jonathan Zdziarski (500px); see the original blog post for more sample photos:

Topaz Labs recently released DeNoise AI, a noise reduction tool for photographers, and so naturally I picked up a copy after reading about a sale on Nikon Rumors. After seeing how good the results turned out, I needed to take some time to talk about this tool. Noise reduction has been the bane of my existence ever since I started astrophotography. My wife and I have been chasing the Northern Lights for several years now, and trying to clean up our shots has always been a challenge. I’ll show you some comparisons to other NR techniques I’ve used in this post. You’ll want to click on the images to zoom in so you can see the dramatic difference this tool makes. DeNoise AI claims to use machine learning as a means of identifying noise.

Now I still can’t replicate a similar situation, and even had to face the opposite, until I realized the MPC-BE player does not handle color space properly when trying to play ProRes. In all fairness, your best bet for now is to use that 10% correction that seems to fix it, in the hope Topaz Labs will investigate this.

As for Staxrip, my version (2.1.7.0) opens the generated ProRes files without any issue. But if you are using the x264 codec, make sure you choose vspipe y4m in your input/output options section, and try to set the profile to automatic. If you use x265, just set the profile to automatic.

As for the filters from AviSynth and VapourSynth, some unfortunately have color space restrictions that require adding a conversion before using them.

I think I now understand why I didn’t notice the problem before: simply because of perception of quality. As you know, video quality has a strong subjective aspect (beyond the obvious objective quality aspects). I realize that my perception bias tells me your ProRes output looks better, and that is wrong.

First let me tell you that you did indeed exaggerate: there is no way you can consider this as having burnt whites or crushed blacks. But you are indeed correct when considering that the result should reflect what the preview shows you, for obvious reasons.

My results look good during upscaling AND when I watch them on my PC with Kodi on an 82" QLED TV. Remember the upscaling control screen on the right is much smaller than the final viewing in fullscreen. On smaller screens many videos look better than on a big screen or in fullscreen.

Now the question is HOW do you watch your results/videos? Are the player settings correct? ALL color settings, not just saturation. And yes, it makes a difference whether I watch my videos direct from PC/Kodi over HDMI to the TV/screen, OR from a USB stick in the TV, OR as a stream from Kodi on the same PC (over the network, not HDMI). Some users recommended disabling any digital enhancements on TVs.

I must confess that when I zap through my collections, I often have to change my TV/player settings for each individual video. Too bright, too dark, too sharp, not sharp enough, too much color, not enough color… almost every video is different. My mother likes the same movie with color boost settings at 100%. In some videos I like to have that extreme soft wax skin look, on others I like it ultra sharp and real.
